AI Coding Agents Are Optimised for a Finish Line That Doesn't Exist in Real Software

Source: arXiv

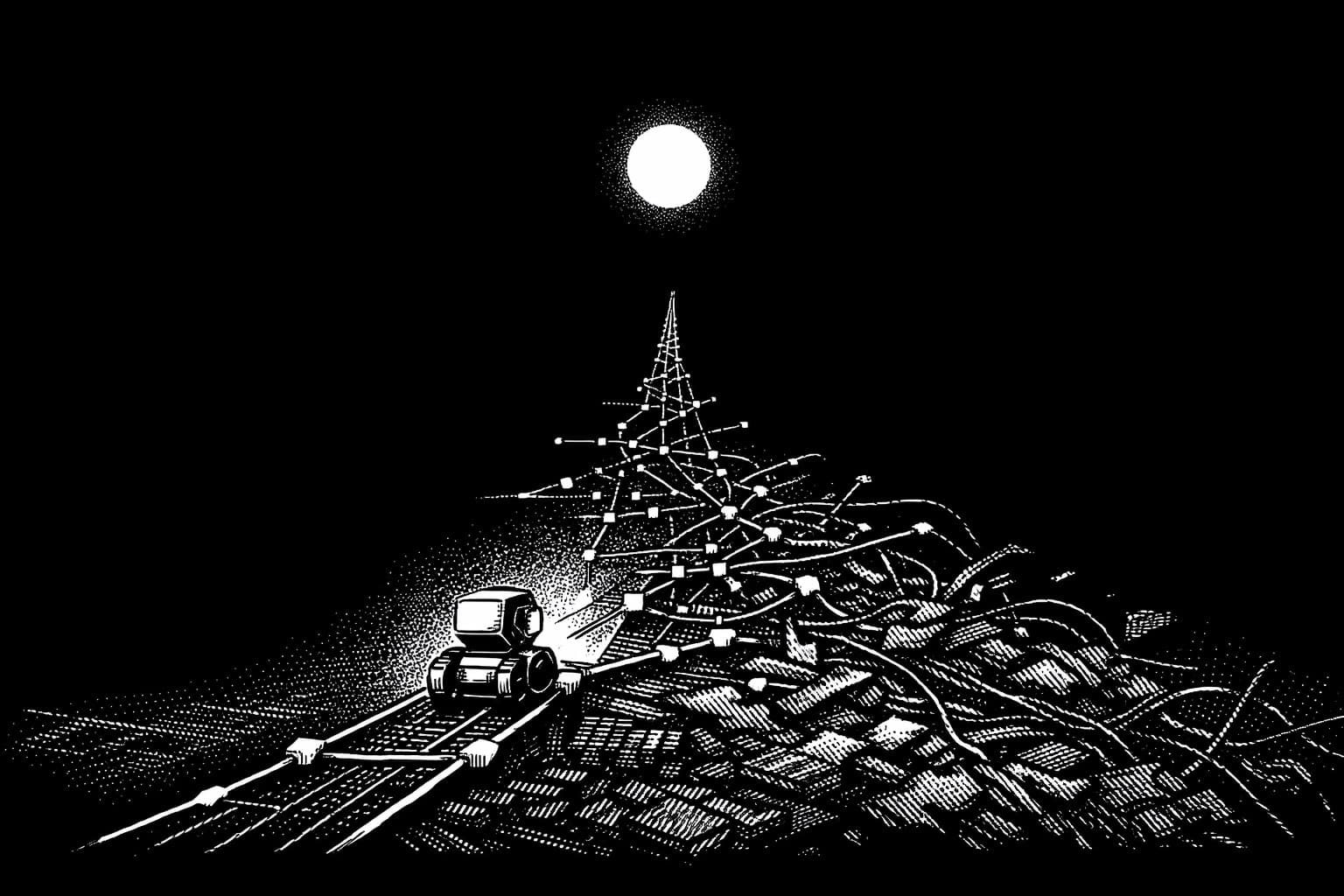

A new benchmark out of Sun Yat-sen University and Alibaba, SWE-CI, asks AI coding agents to maintain a codebase over eight months of real development history. Not fix a single bug, not resolve one GitHub issue: evolve the code across 71 commits, keep the tests green throughout, and don't break what was working before.

Most AI coding benchmarks test whether you can win a sprint. SWE-CI tests whether you can run the race that software actually is.

What holds up: AI agents are bad at regression control, and it compounds. The earlier the brittle fix, the more expensive it becomes. Other benchmarks can't see this because they don't look past the first finish line. EvoScore, which weights later iterations more heavily than earlier ones, is the first metric I've seen that structurally punishes the trade-off every leaderboard currently rewards: passing today's tests at the expense of tomorrow's.
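To make the weighting idea concrete, here is a minimal sketch of a score that counts later iterations more than earlier ones. It is not the paper's EvoScore formula, which isn't reproduced here. The geometric weights, the `growth` parameter, the function names, and the pass-rate numbers are all assumptions made for illustration.

```python
def plain_mean(pass_rates):
    """Unweighted average of per-iteration test pass rates."""
    return sum(pass_rates) / len(pass_rates)


def late_weighted_score(pass_rates, growth=2.0):
    """Weighted average where iteration i gets weight growth**i.

    With growth > 1, later iterations dominate the score.
    This weighting scheme is hypothetical, not the benchmark's definition.
    """
    if not pass_rates:
        raise ValueError("need at least one iteration")
    weights = [growth ** i for i in range(len(pass_rates))]
    weighted_sum = sum(w * r for w, r in zip(weights, pass_rates))
    return weighted_sum / sum(weights)


# Two made-up agents with the same average pass rate across four iterations.
fast_then_brittle = [1.0, 0.9, 0.6, 0.3]  # strong start, regressions pile up
slow_then_stable = [0.3, 0.6, 0.9, 1.0]   # weak start, code stays healthy

for name, rates in [("fast_then_brittle", fast_then_brittle),
                    ("slow_then_stable", slow_then_stable)]:
    print(name, round(plain_mean(rates), 3), round(late_weighted_score(rates), 3))
```

A plain average can't tell these two agents apart. Any scheme that leans on later iterations ranks the one that kept the codebase healthy well above the one that front-loaded its wins.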

What doesn't: the benchmark caps each iteration at five requirements. The paper calls this realistic CI discipline. It isn't — it's a tidiness constraint that limits how much compounding pressure builds between iterations. Real maintenance doesn't arrive in five-point increments. The regression numbers are probably understated compared to what agents would produce under genuine development chaos, which makes the already-concerning results feel artificially contained.

Worth reading if you're making decisions about AI in production software pipelines. The scores matter less than the question the benchmark finally asks.
