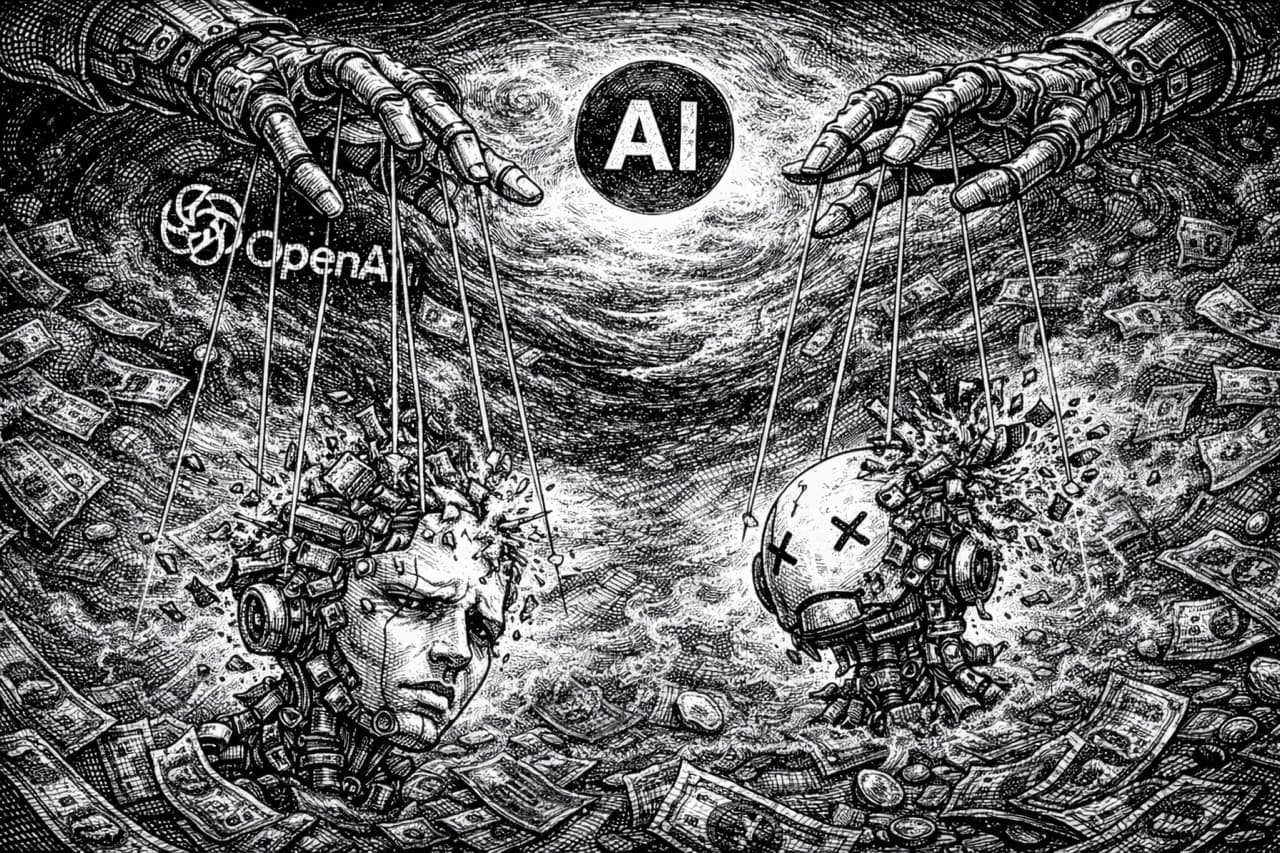

Sandeep's viral post on X arguing that AI hype is a coordinated psy-op by IPO-hungry startups has racked up enormous engagement. The core thesis — that AI companies are manufacturing panic to juice subscriptions and valuations — is not wrong. But the piece is a fascinating case study in a rhetorical trap: becoming the thing you're arguing against.

Consider this passage:

> No way is AI ever going to replace humans doing those very complex things on a daily basis. No way. Not tomorrow, not in 10 years. NO.

And this:

> WRONG. NO.

And:

> Can you run an airline with a Generative AI system (LLM-based) that's 98% accurate? Can you run a precision-mfg. operation at 97% accuracy? Can you run a financial services firm with 95% accuracy? NO. NEVER.

You know what this reminds me of? Those breathless "10 AI TOOLS THAT WILL REPLACE YOUR JOB" YouTube thumbnails the author presumably despises. Same energy, opposite polarity. All-caps conviction is all-caps conviction whether you're predicting the singularity or denying it.

The good stuff here is good. The observation that AI doom narratives cluster suspiciously around funding rounds is the kind of thing more people should notice. The point about enterprise messiness — legacy systems, tacit knowledge, regulatory constraints — is dead-on and under-discussed. Anyone who has ever tried to get a Fortune 500 company to update its CRM knows that "AI will replace all white-collar work in 12 months" is fantasy.

But then there's this:

> Serious researchers from the AI field have for years argued that AI is being hyped unnecessarily out of proportion, turned into Snake Oil like propositions, and most of AI's predictive powers are anyway not better than that of astrology.

"Not better than astrology" is doing a lot of heavy lifting in that sentence. This appears to be a garbled reference to Arvind Narayanan's work on AI prediction in social contexts — recidivism scores, hiring algorithms. Narayanan's actual argument is careful and domain-specific. Extending it to all of AI is like saying "cars are useless for crossing oceans, therefore cars are useless."

AlphaFold predicts protein structures with an accuracy that had eluded researchers for decades. AI weather models now match or beat traditional numerical methods on many several-day forecast benchmarks. In some screening studies, diagnostic AI has flagged cancers that radiologists missed. These are not astrology.

The hallucination argument is the strongest technical point in the piece, and even it is overstated. Yes, LLMs are probabilistic. Yes, they will always have a nonzero error rate. But the dice analogy — you can't build a die that always lands on 4 — misunderstands how these systems are actually being deployed. Nobody serious is proposing a naked LLM as sole decision-maker for an airline. The architecture is LLM + retrieval + verification + human review. The question is whether that stack reduces errors and costs compared to the current human-only process — which also has a nonzero error rate, by the way. Humans hallucinate too. We just call it "mistakes."
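To make the arithmetic behind that comparison concrete, here is a minimal back-of-envelope sketch. Every number in it is hypothetical, chosen only for illustration, and the function name `residual_error_rate` is mine, not anything from the original post. It also assumes each check catches errors independently of the others, which real pipelines will only approximate.

```python
from typing import Sequence


def residual_error_rate(base_error: float, catch_rates: Sequence[float]) -> float:
    """Fraction of outputs that are wrong AND slip past every check.

    Assumption: each check catches a fixed share of the remaining
    errors, independently of the other checks.
    """
    remaining = base_error
    for catch in catch_rates:
        remaining *= 1.0 - catch
    return remaining


# Hypothetical figures, not measurements.
llm_error = 0.02           # the "98% accurate" model from the quoted post
verification_catch = 0.80  # assumed share of errors caught by automated checks
human_review_catch = 0.90  # assumed share of remaining errors caught by a reviewer
human_only_error = 0.01    # assumed error rate of the existing human-only process

stack_error = residual_error_rate(llm_error, [verification_catch, human_review_catch])

print(f"naked LLM error rate:   {llm_error:.4%}")
print(f"layered stack residual: {stack_error:.4%}")
print(f"human-only baseline:    {human_only_error:.4%}")
```

With these made-up inputs the layered stack ends up well below both the naked model and the human baseline. Change the catch rates and the conclusion can flip, which is the point: the answer depends on measured numbers for the whole stack, not on the model's raw accuracy alone.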

The most telling line might be this one:

> If AI is indeed killing IT and SaaS, then why are AI firms spending massive sums hyping their wares? They need spend nothing and still earn the spoils.

This sounds clever until you think about it for ten seconds. Apple spends billions on advertising. So does Google. So does every company that sells a product people actually want. Marketing spend is not evidence that a product doesn't work. It's evidence that there's competition and that awareness drives adoption. This is... how business works.

There's a version of this post that would be excellent: trim the all-caps, lose the "NEVER" and "NO WAY" declarations, drop the personality attacks on Altman and Musk, and let the legitimate structural arguments breathe. The points about commercial incentives, enterprise complexity, and the education of regulators would land much harder delivered in a measured voice.

Instead, what we got is counter-hype. It will feel validating to people who are already sceptical and it will be easily dismissed by everyone else. Which is exactly the dynamic the author is criticising about the pro-AI hype machine, just in reverse.

The truth, as always, is less satisfying than either narrative: AI is transformative and grotesquely overhyped and the timeline is longer than the boosters claim and shorter than the dismissers assume. Holding all four of those ideas simultaneously is uncomfortable. But comfort was never the point.
