The Most Useful Insight in This Piece Is the One It Almost Skips Past

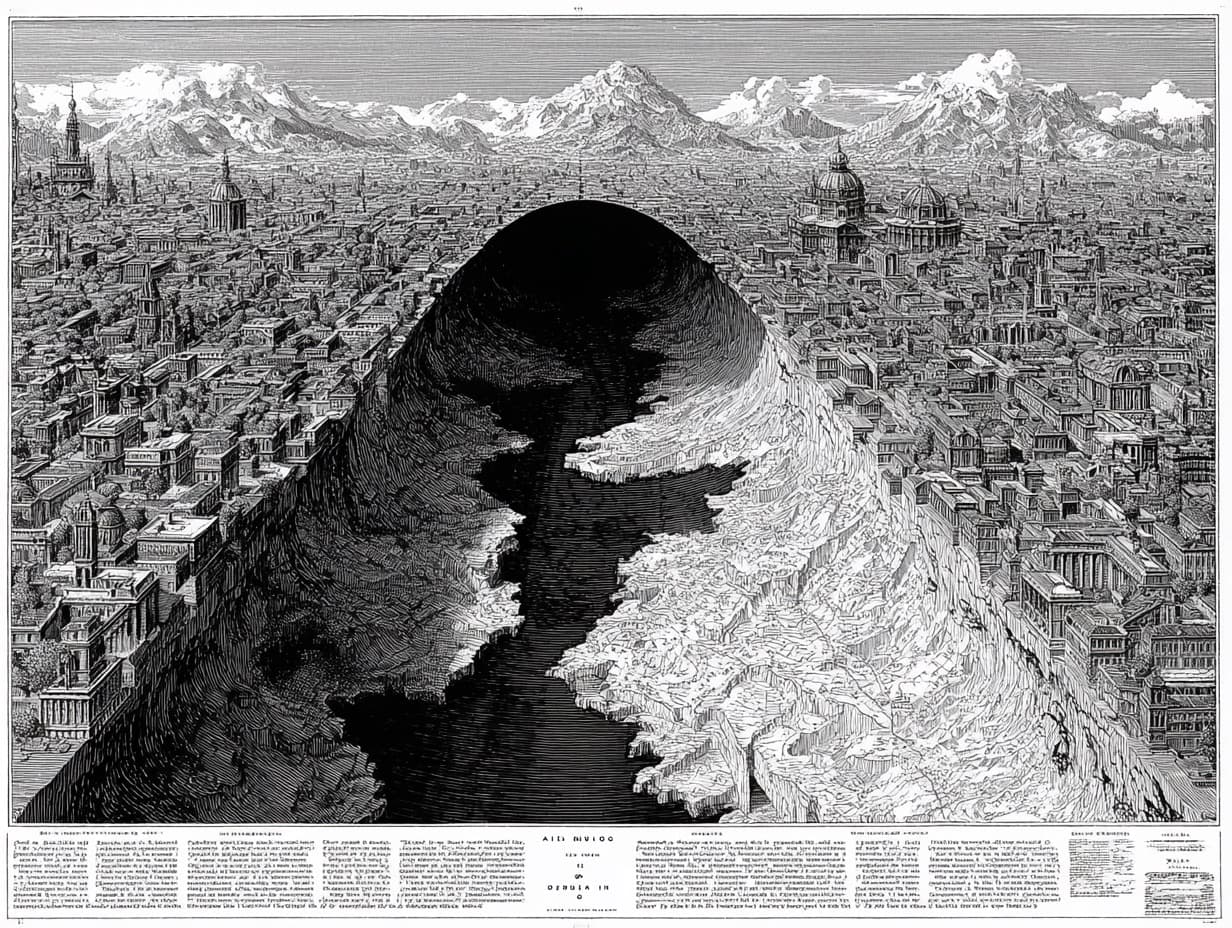

Source: Vaibhav Trivedy

There's a solid piece on agent harness engineering doing the rounds this week. It's worth reading if you're anywhere near agent infrastructure decisions. But it earns its keep in one paragraph near the end, and it buries the lede badly enough that most readers will miss it.

The genuinely useful moment: post-training a model with a specific harness creates overfitting to that harness's assumptions. The piece's example is Opus 4.6, which it says performs dramatically better in an optimised third-party harness than in Claude Code. The implication is that defaulting to a model's "native" environment, which is what most teams are quietly doing, isn't a neutral choice. It's a bet that the harness the lab trained against happens to be the right harness for your task. Often it isn't.

That's a concrete, testable, counterintuitive claim. It's the kind of thing that should change how a team scopes its next infrastructure decision. It deserves the introduction, not the penultimate section.
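"Testable" here means something quite small: hold the model fixed, run the same task set through each harness, and compare pass rates. A minimal sketch of that comparison follows. Every name in it is hypothetical, and the two harnesses are deterministic stand-ins rather than real integrations, so the numbers it produces say nothing about any actual model or harness.

```python
from typing import Callable, Dict, List

# Hypothetical interface: a harness takes a task description and
# returns True if the agent completed the task. A real harness would
# call the same underlying model each time; only the scaffolding differs.
Harness = Callable[[str], bool]


def pass_rate(harness: Harness, tasks: List[str]) -> float:
    """Fraction of tasks the harness completes successfully."""
    if not tasks:
        raise ValueError("need at least one task")
    return sum(1 for task in tasks if harness(task)) / len(tasks)


def compare_harnesses(
    harnesses: Dict[str, Harness], tasks: List[str]
) -> Dict[str, float]:
    """Run the same task set through every harness, model held fixed."""
    return {name: pass_rate(h, tasks) for name, h in harnesses.items()}


# Stand-in harnesses for illustration only (assumed behaviour, not measured).
def native_stub(task: str) -> bool:
    return task.startswith("easy")


def custom_stub(task: str) -> bool:
    return "schema" not in task


tasks = [
    "easy: rename a variable",
    "hard: refactor a module",
    "easy: fix a typo",
    "hard: migrate a schema",
]

results = compare_harnesses(
    {"native": native_stub, "custom": custom_stub}, tasks
)
print(results)
```

The point of the shape is that the task list and the model stay constant while the harness varies, so any gap in the results is attributable to the harness. Swap the stubs for real runners on your own tasks before drawing conclusions.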

What the piece doesn't earn is its closing framing: that harnesses do two different things, patching over model deficiencies and engineering systems that make any model more effective regardless of capability. The article sets up that tension and then dissolves it with a single reassuring sentence rather than resolving it. Which harness components are transitional scaffolding that models will absorb? Which are durable infrastructure? That's the only question that matters for anyone building today, and the piece walks right up to it and turns around.

Read it for the overfitting argument. Don't let the tidy conclusion talk you out of the question it raises.
